RubixKube in Action: Multi-Failure Detection

See RubixKube handle multiple simultaneous failures, just like real production incidents. This tutorial demonstrates the power of the Agent Mesh and Memory Engine working together.

Real Scenario: We created 3 different pod failures at once. RubixKube detected all of them, prioritized them by severity, and provided specific guidance for each.

The Production-Like Scenario

In our demo, we deployed 3 pods that would fail in different ways:

- ImagePullBackOff (broken-image-demo): Invalid container registry, so the image doesn’t exist

- OOMKilled (memory-hog-demo): Memory limit is 50Mi, but the application needs 100Mi, so it gets killed repeatedly

- CrashLoop (crash-loop-demo): Application exits with code 1 and crashes on startup

RubixKube’s Response (Real Data)

Within **2 minutes**, RubixKube detected all three failures:

What Happened

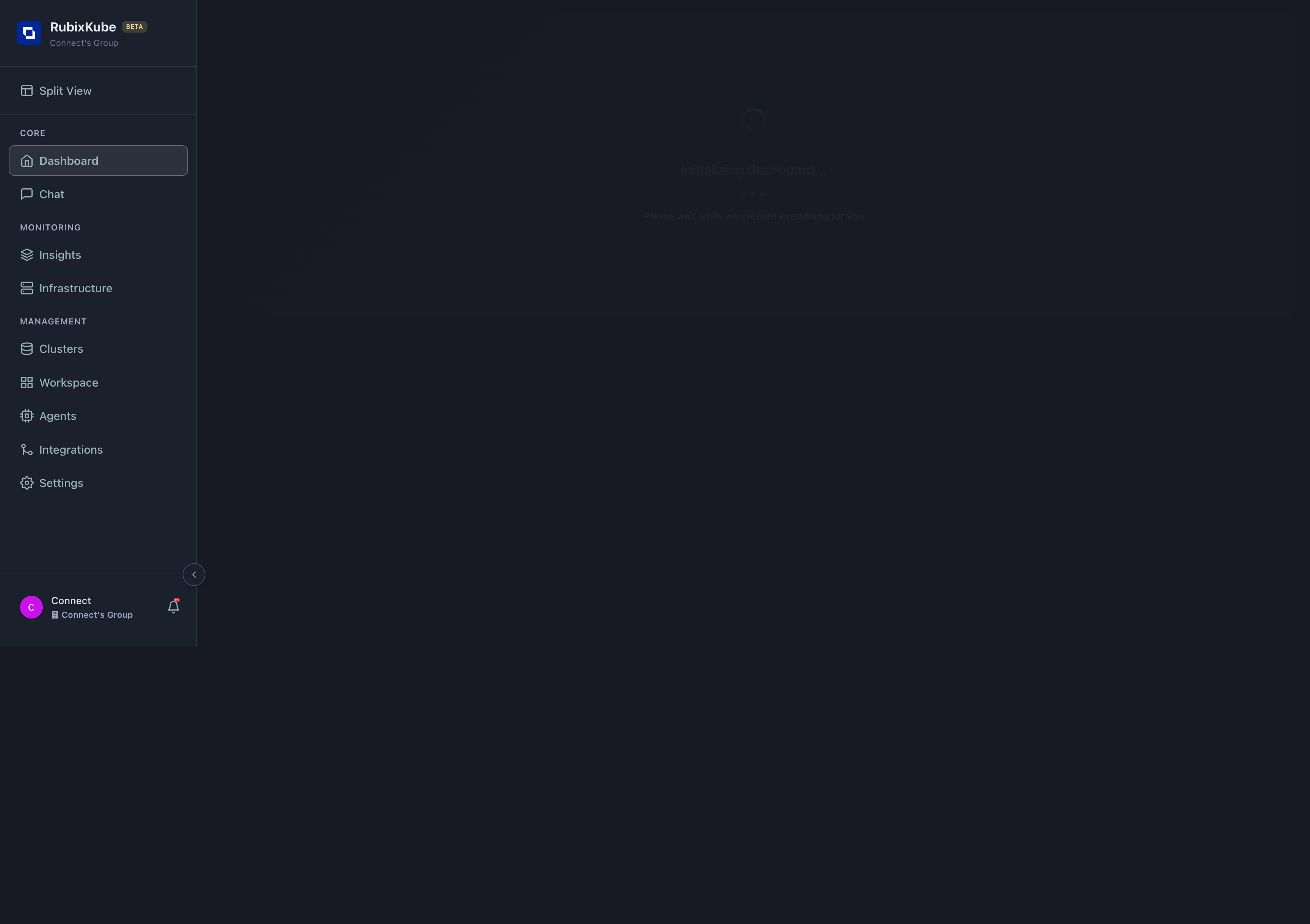

Dashboard Metrics Changed:

- **Active Insights:** 3 (three separate issues detected)

- Notifications: 0 → **20+** (detailed event stream)

- System Health: 100% (overall cluster still healthy - isolated failures)

- Agents: 3/3 Active (all AI agents working)

Severity: medium | 2 minutes ago

Out of memory (OOMKilled) detected on Pod/memory-hog-demo

Severity: HIGH | 2 minutes ago

Incident #2: OOMKilled in memory-hog-demo

Details:

- Out of Memory

- Restart Count: 3

- Severity: HIGH

- Memory Limit: 50Mi (too low)

- Memory limit set to 50Mi

- Kubernetes killed container to protect node

- Monitor for memory leaks

- Consider HPA for auto-scaling
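Kubernetes records this kind of kill in the pod's status, which is one place such an incident can be spotted. The sketch below is not RubixKube's detection logic. It is a minimal illustration that reads the standard pod status fields (`containerStatuses`, `state.waiting.reason`, `lastState.terminated.reason`) from a pod object represented as a plain dictionary. The function name `detect_failure` and the sample pod are hypothetical.

```python
from typing import Optional


def detect_failure(pod: dict) -> Optional[str]:
    """Return a coarse failure label for a pod dict, or None if nothing matches.

    Hypothetical helper: it only inspects standard Kubernetes status fields.
    """
    for cs in pod.get("status", {}).get("containerStatuses", []):
        state = cs.get("state", {})
        current_term = state.get("terminated") or {}
        last_term = cs.get("lastState", {}).get("terminated") or {}
        waiting = state.get("waiting") or {}

        # An OOM kill shows up as the termination reason of the current
        # or the previous container instance. Check it first, because an
        # OOM-killed container usually also ends up in CrashLoopBackOff.
        if "OOMKilled" in (current_term.get("reason"), last_term.get("reason")):
            return "OOMKilled"
        if waiting.get("reason") in ("ImagePullBackOff", "ErrImagePull"):
            return "ImagePullBackOff"
        if waiting.get("reason") == "CrashLoopBackOff":
            return "CrashLoop"
    return None


# Illustrative status for memory-hog-demo (values are assumptions,
# apart from the restart count of 3 reported above).
sample_pod = {
    "metadata": {"name": "memory-hog-demo"},
    "status": {
        "containerStatuses": [
            {
                "restartCount": 3,
                "state": {"waiting": {"reason": "CrashLoopBackOff"}},
                "lastState": {"terminated": {"reason": "OOMKilled", "exitCode": 137}},
            }
        ]
    },
}

print(detect_failure(sample_pod))
```

Checking the OOM reason before the waiting reason matters here: the same container reports both, and the memory kill is the more specific signal.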

Incident #3: CrashLoop in memory-hog-demo

Related Incident:

- This crash loop is **caused by** the OOMKilled incident

- RubixKube shows the correlation

- Fixing the memory issue resolves both

Agent Mesh Collaboration

Behind the scenes, multiple agents worked together:

Observer Agent

- Detected all 3 pod failures within 30 seconds of each occurring

- Sent events to RCA Pipeline Agent for analysis

RCA Pipeline Agent

Analyzed each incident:

- Gathered logs, events, pod specs

- Identified root causes

- Calculated severity

- Generated suggestions

Memory Agent

- Recalled similar past incidents (if any existed)

- Stored these new incidents for future pattern matching

Total analysis time: 60-90 seconds for all 3 incidents
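The handoff between the three agents can be pictured as a small pipeline. The following is only a conceptual sketch, not RubixKube's implementation or API: every name in it (`Incident`, `MemoryStore`, `observe`, `analyze`, `run_pipeline`) is hypothetical, and the RCA step is reduced to a placeholder.

```python
from dataclasses import dataclass, field


@dataclass
class Incident:
    pod: str
    failure: str
    root_cause: str = ""
    similar_past: list = field(default_factory=list)


class MemoryStore:
    """Hypothetical in-process stand-in for the Memory Agent."""

    def __init__(self):
        self._history = []

    def recall(self, failure: str) -> list:
        # Return earlier incidents with the same failure type.
        return [i for i in self._history if i.failure == failure]

    def store(self, incident: Incident) -> None:
        self._history.append(incident)


def observe(raw_events):
    """Observer step: turn (pod, failure) pairs into incidents."""
    return [Incident(pod=pod, failure=failure) for pod, failure in raw_events]


def analyze(incident: Incident) -> Incident:
    """RCA step: placeholder that only records a summary string."""
    incident.root_cause = f"{incident.failure} on {incident.pod}"
    return incident


def run_pipeline(raw_events, memory: MemoryStore):
    incidents = [analyze(i) for i in observe(raw_events)]
    # Recall first, then store, so incidents from the same batch
    # are not reported as "past" incidents of each other.
    for incident in incidents:
        incident.similar_past = memory.recall(incident.failure)
    for incident in incidents:
        memory.store(incident)
    return incidents


events = [
    ("broken-image-demo", "ImagePullBackOff"),
    ("memory-hog-demo", "OOMKilled"),
    ("crash-loop-demo", "CrashLoop"),
]
memory = MemoryStore()
first_run = run_pipeline(events, memory)
second_run = run_pipeline(events, memory)
print([len(i.similar_past) for i in first_run])
print([len(i.similar_past) for i in second_run])
```

On the first run nothing is recalled because the store is empty. On the second run each incident finds its counterpart from the first run, which mirrors the "if any existed" behaviour described above.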

Key Observations

1. Parallel Detection

All 3 incidents were detected in parallel, within 10 seconds of one another:

| Incident | Detection Time | Severity | RCA Progress |
|---|---|---|---|
| crash-loop-demo | 01:58:00 AM | Medium | 60% |
| memory-hog-demo (crash) | 01:58:05 AM | Medium | 60% |
| memory-hog-demo (OOM) | 01:58:10 AM | HIGH | 80% |

2. Severity Prioritization

RubixKube ranked issues appropriately:

- HIGH: OOMKilled (resource exhaustion, node impact risk)

- Medium: CrashLoops (isolated pod failures)
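As an illustration of this ordering, the incidents from the table can be sorted by a severity rank. This is a minimal sketch rather than RubixKube's actual scoring: the `SEVERITY_RANK` mapping, the `prioritize` helper, and the "low" level are assumptions added for the example.

```python
# Lower rank means "look at this first". The "low" level is an assumption;
# only HIGH and Medium appear in the demo.
SEVERITY_RANK = {"high": 0, "medium": 1, "low": 2}

incidents = [
    {"pod": "crash-loop-demo", "type": "CrashLoop", "severity": "Medium"},
    {"pod": "memory-hog-demo", "type": "CrashLoop", "severity": "Medium"},
    {"pod": "memory-hog-demo", "type": "OOMKilled", "severity": "HIGH"},
]


def prioritize(items):
    """Return incidents ordered by severity; unknown severities go last.

    sorted() is stable, so incidents with equal severity keep their
    original (detection) order.
    """
    return sorted(
        items,
        key=lambda inc: SEVERITY_RANK.get(inc["severity"].lower(), len(SEVERITY_RANK)),
    )


for inc in prioritize(incidents):
    print(inc["severity"], inc["type"], inc["pod"])
```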

3. Event Correlation

RubixKube connected related incidents:

- Recognized that memory-hog-demo’s crash loop is **caused by** the OOMKilled incident

- Suggested that fixing the root cause (memory) would resolve both
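One simple way to picture this kind of correlation is to group incidents by pod and link a crash loop to an OOM kill when both occur on the same pod. The sketch below uses that rule purely for illustration. It is an assumption, not RubixKube's correlation logic, and the `correlate` function is hypothetical.

```python
from collections import defaultdict

incidents = [
    {"pod": "crash-loop-demo", "type": "CrashLoop"},
    {"pod": "memory-hog-demo", "type": "CrashLoop"},
    {"pod": "memory-hog-demo", "type": "OOMKilled"},
]


def correlate(items):
    """Link CrashLoop incidents to an OOMKilled incident on the same pod."""
    by_pod = defaultdict(set)
    for inc in items:
        by_pod[inc["pod"]].add(inc["type"])

    links = []
    for pod, types in by_pod.items():
        # Both signals on one pod: treat the memory kill as the root cause
        # and the crash loop as its symptom.
        if {"OOMKilled", "CrashLoop"} <= types:
            links.append({"pod": pod, "root_cause": "OOMKilled", "symptom": "CrashLoop"})
    return links


print(correlate(incidents))
```

With the demo data, only memory-hog-demo is linked. The crash loop on crash-loop-demo stays a separate incident because that pod has no OOM kill.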

Real-World Parallels

This scenario mirrors actual production incidents:

Bad Deployment Scenario

Real example:

- New version deployed with typo in image tag (ImagePullBackOff)

- Same version has memory leak (OOMKilled after 10 minutes)

- Database connection pool exhausted (CrashLoop)

RubixKube would:

- Prioritize by severity

- Show which are related

- Suggest rollback to previous version

Resource Misconfiguration

Real example:

- HPA scaled deployment to 10 replicas

- Namespace quota only allows 8

- 2 pods stuck pending

- Remaining 8 pods OOMKilling due to increased load

RubixKube would:

- Identify OOM root cause

- Suggest quota increase OR replica reduction

- Show which pods are affected

Cascading Failure

Real example:

- Database pod OOMKilled

- API pods start failing (can’t connect to DB)

- Frontend shows 500 errors

- Load balancer marks all backends unhealthy

RubixKube would:

- Identify DB memory as root cause

- Show dependency graph

- Suggest fixing DB first (not the symptoms)

Cleanup

Remove the three demo pods (broken-image-demo, memory-hog-demo, crash-loop-demo). Once they are deleted:

- Activity Feed shows cleanup

- Active Insights returns to 0

- Memory retains the learnings for future incidents
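A minimal sketch of the cleanup step, assuming the three demo pods were created as bare pods in the `default` namespace. The namespace, the `cleanup_commands` helper, and driving `kubectl` from Python are assumptions; if the pods are managed by a Deployment, the owning resource has to be deleted instead. The code only builds and prints the commands. Running them is left to the reader.

```python
import shlex

DEMO_PODS = ["broken-image-demo", "memory-hog-demo", "crash-loop-demo"]


def cleanup_commands(pods=DEMO_PODS, namespace="default"):
    """Build one `kubectl delete pod` command per demo pod.

    --ignore-not-found keeps the command from failing if a pod
    has already been removed.
    """
    return [
        ["kubectl", "delete", "pod", name, "--namespace", namespace, "--ignore-not-found"]
        for name in pods
    ]


# Print the commands for review. To execute them, pass each list to
# subprocess.run(cmd, check=True) on a machine with cluster access.
for cmd in cleanup_commands():
    print(shlex.join(cmd))
```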

What You Learned

Multi-Incident Handling

RubixKube detects and analyzes multiple failures simultaneously

Intelligent Prioritization

Severity ranking helps you focus on critical issues first

Event Correlation

Related incidents are connected - fix root causes, not symptoms

Comprehensive Analysis

Each incident gets detailed RCA with suggestions